Vibe Coding Is Dead. Long Live Structured AI Development


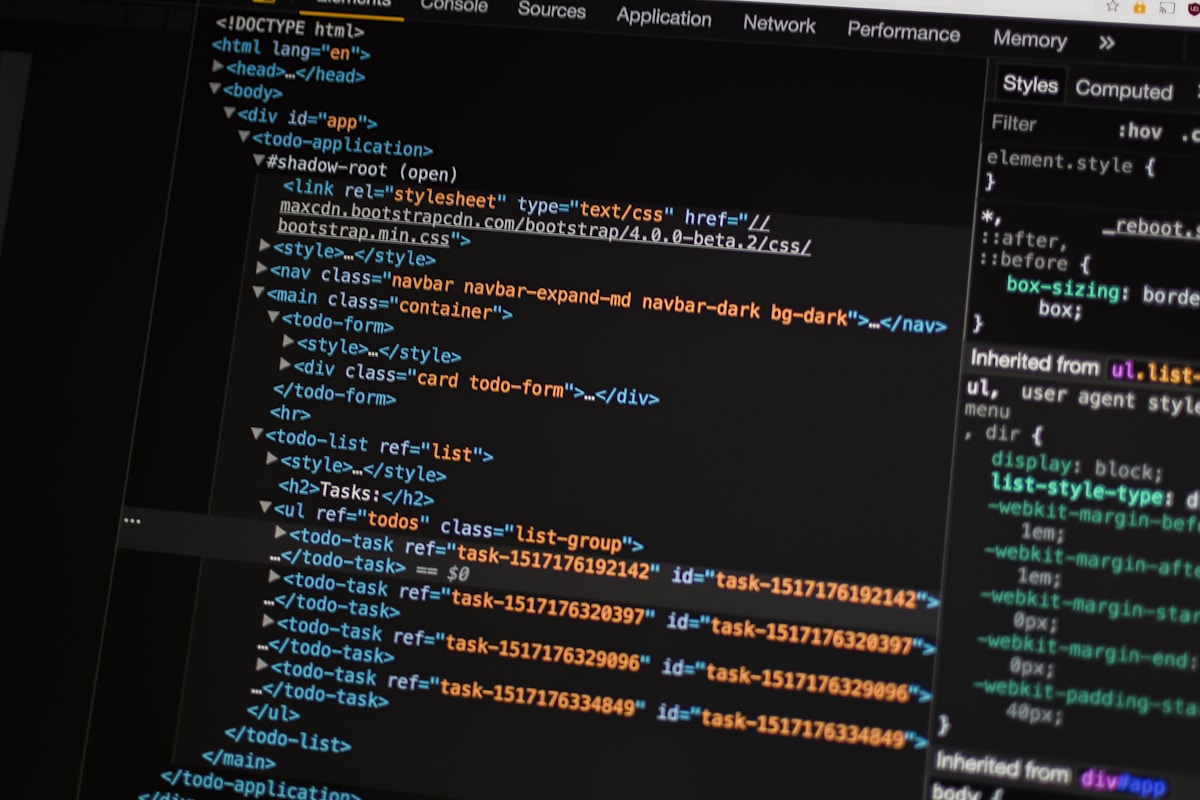

In early 2025, the term “vibe coding” swept through developer Twitter. The pitch was irresistible: stop worrying about the details, just describe what you want, and let AI figure out the implementation. Ship fast. Iterate faster. Who cares if you don’t fully understand every line?

Fourteen months later, the bill has come due.

Teams that leaned into pure vibe coding are now dealing with codebases that nobody understands, security vulnerabilities introduced by AI-generated code, and technical debt that would make a 2010s PHP monolith blush. The honeymoon phase is over, and a more nuanced picture of AI-assisted development is emerging.

This isn’t an “AI coding tools are bad” post. They’re not. They’re extraordinary. But the way we use them matters enormously, and the field is maturing past its initial infatuation.

What Went Wrong with Pure Vibe Coding

Let me be concrete about the failure modes, because they’re instructive.

The “working but wrong” problem

AI-generated code often works — it passes tests, it produces output — but does the wrong thing in subtle ways. A payment processing function that handles normal cases correctly but silently drops transactions during certain edge conditions. An auth flow that works 99.9% of the time but has a race condition that only appears under load.

Vibe coding, by its nature, doesn’t encourage the deep understanding needed to catch these issues. If you don’t understand the code, you can’t write tests that cover the edge cases, and you can’t review it for correctness.

Context window amnesia

Long vibe coding sessions have a fundamental problem: the AI loses context. You built a feature in session 1. You added to it in session 2. By session 5, the AI is making suggestions that contradict architectural decisions made earlier, because it doesn’t remember them.

Without explicit documentation of decisions, constraints, and architecture, AI-assisted sessions become increasingly incoherent.

Security debt accumulation

Security vulnerabilities in AI-generated code tend to cluster around a few patterns: SQL injection (AI uses string formatting instead of parameterized queries), insecure deserialization, missing input validation, and hardcoded credentials. None of these are exotic. But a developer who doesn’t read the generated code carefully won’t catch them.
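To make the first pattern concrete, here is a minimal sketch contrasting the two query styles. The `users` table, the function names, and the `$1` placeholder (PostgreSQL convention) are assumptions for illustration, and no database driver is involved; the point is only where the user input ends up.

```typescript
// Hypothetical shape of a parameterized query: SQL text plus separate values.
interface ParameterizedQuery {
  text: string;
  values: unknown[];
}

// UNSAFE: user input is spliced directly into the SQL text.
function findUserUnsafe(email: string): string {
  return `SELECT id, email FROM users WHERE email = '${email}'`;
}

// SAFER: the SQL text is constant; the input travels separately as a bound value.
function findUserParameterized(email: string): ParameterizedQuery {
  return {
    text: "SELECT id, email FROM users WHERE email = $1",
    values: [email],
  };
}

const malicious = "x' OR '1'='1";
console.log(findUserUnsafe(malicious)); // the attacker's OR clause becomes part of the SQL
console.log(findUserParameterized(malicious)); // the SQL text is unchanged by the input
```

When reviewing generated code, the thing to look for is the first shape: any place where a request value appears inside a template string or concatenation that ends up as SQL.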

What Structured AI Development Looks Like

The teams doing this well in 2026 have evolved a set of practices that preserve the speed advantages of AI assistance while maintaining code quality and security.

1. Specification-First Development

Before touching an AI coding tool, write a specification. Not a novel — a structured document that covers:

# Feature: User Profile Photo Upload

## Context
- Users can upload profile photos
- Photos are stored in S3, URLs in PostgreSQL
- Current stack: Next.js 15, Prisma, AWS SDK v3

## Requirements
- Max file size: 5MB
- Accepted formats: JPEG, PNG, WebP
- Photos must be resized to 200x200 for avatar display
- Original stored at full resolution
- Old photo must be deleted from S3 when replaced

## Constraints
- Must work within existing auth middleware
- Use existing S3 bucket (env: PROFILE_PHOTOS_BUCKET)
- No new dependencies without discussion

## Out of scope
- Photo cropping UI (future ticket)
- Video support

This document becomes the prompt context AND the review checklist. If the AI generates code that doesn’t satisfy each point, you catch it during review.
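One way to use the spec as a checklist is to turn its measurable requirements into a small validator and compare the generated code against it. The sketch below covers only the size and format rules from the spec above; the type and function names are hypothetical, and reading "5MB" as 5 × 1024 × 1024 bytes is an assumption the spec itself would need to settle.

```typescript
// Limits taken from the spec's Requirements section.
// Assumption: "5MB" is interpreted as 5 * 1024 * 1024 bytes.
const MAX_FILE_SIZE_BYTES = 5 * 1024 * 1024;
const ACCEPTED_MIME_TYPES: readonly string[] = ["image/jpeg", "image/png", "image/webp"];

// Hypothetical description of an incoming upload.
interface UploadCandidate {
  sizeBytes: number;
  mimeType: string;
}

type ValidationResult = { ok: true } | { ok: false; reasons: string[] };

// Checks an upload against the spec and reports every violated requirement.
function validateUpload(file: UploadCandidate): ValidationResult {
  const reasons: string[] = [];
  if (file.sizeBytes > MAX_FILE_SIZE_BYTES) {
    reasons.push("file exceeds 5MB limit");
  }
  if (ACCEPTED_MIME_TYPES.indexOf(file.mimeType) === -1) {
    reasons.push("format is not JPEG, PNG, or WebP");
  }
  return reasons.length === 0 ? { ok: true } : { ok: false, reasons };
}
```

If the AI's implementation accepts a 6MB GIF and this function does not, the spec has done its job as a review tool.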

2. Incremental Generation with Review Gates

Don’t ask AI to generate an entire feature at once. Break it into units:

- Generate the data model / schema changes

- Review and approve

- Generate the service layer

- Review and approve

- Generate the API endpoint

- Review and approve

- Generate tests

Each review gate is a forcing function for understanding. You can’t review what you don’t understand — and if you don’t understand it, that’s a signal to ask questions or simplify.
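The gating rule itself is simple enough to write down. This is only a sketch of the ordering described above, with illustrative stage names; in practice the "approval" is a human review, not a function call.

```typescript
// Units of work in the order listed above; the stage names are illustrative.
const STAGES = ["schema", "service", "api", "tests"] as const;
type Stage = (typeof STAGES)[number];

// Returns the only stage that may be generated next: the earliest stage
// that has not yet been reviewed and approved. Returns null when all are done.
function nextStageToGenerate(approved: readonly Stage[]): Stage | null {
  for (const stage of STAGES) {
    if (approved.indexOf(stage) === -1) {
      return stage;
    }
  }
  return null;
}

console.log(nextStageToGenerate([])); // nothing approved yet, so start with the schema
console.log(nextStageToGenerate(["schema"])); // schema approved, so the service layer is next
```

Note that approving a later stage out of order does not unlock anything: the earliest unapproved stage is always the next one to work on.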

3. Architecture Decision Records (ADRs) as AI Context

Architectural decisions that exist only in engineers’ heads are invisible to AI coding tools. The solution is ADRs — short documents that capture key decisions:

# ADR-047: Use Background Jobs for Email Sending

## Date: 2026-01-15
## Status: Accepted

## Context
Email sending is slow (200-500ms) and occasionally fails. Doing it
synchronously in request handlers degrades response times and causes
transaction rollbacks on email provider failures.

## Decision
All emails are sent via background jobs using BullMQ. The API handler
enqueues the job and returns immediately. Job workers handle retries.

## Consequences
- Response times are unaffected by email provider latency
- Emails may be delayed by seconds under high load
- Need monitoring for job queue depth
- Email sending is eventually consistent (not guaranteed same-request)

When starting an AI coding session, relevant ADRs go into the context. Now the AI knows: “Don’t add email sending to request handlers. Use the job queue.”
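As a rough illustration of what code that respects this ADR looks like, the sketch below has a handler that only enqueues a job. The `JobQueue` interface, the `EmailJob` payload, and `handleSignup` are all hypothetical, and the in-memory queue is a stand-in so the example runs without a queue server. A real BullMQ-backed client would be asynchronous and is not shown here.

```typescript
// Hypothetical job payload for an outgoing email.
interface EmailJob {
  to: string;
  template: string;
}

// Hypothetical queue abstraction; enqueue returns an identifier for the job.
interface JobQueue<T> {
  enqueue(job: T): string;
}

// In-memory stand-in for a real job queue, used only for this sketch.
class InMemoryQueue<T> implements JobQueue<T> {
  readonly jobs: T[] = [];

  enqueue(job: T): string {
    this.jobs.push(job);
    return `job-${this.jobs.length}`;
  }
}

interface HandlerResponse {
  status: number;
  body: { jobId: string };
}

// The handler never talks to the email provider; it enqueues and returns.
function handleSignup(email: string, queue: JobQueue<EmailJob>): HandlerResponse {
  const jobId = queue.enqueue({ to: email, template: "welcome" });
  return { status: 202, body: { jobId } };
}
```

During review, the ADR gives you a one-line test for generated handlers: if a request handler calls the email provider directly, it violates a recorded decision.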

4. Security Checklists That Run on AI Output

OWASP maintains excellent checklists, but reviewing AI output against a 100-point checklist is impractical. Instead, create a short, project-specific list of the vulnerabilities most likely to appear in your codebase:

# AI Code Review Security Checklist

## Database queries
- [ ] All user input uses parameterized queries / ORM methods
- [ ] No raw string concatenation in SQL

## Authentication
- [ ] New endpoints have auth middleware applied
- [ ] Permissions are checked, not just authentication

## File operations
- [ ] File paths are validated and canonicalized
- [ ] Uploaded files are validated by content, not just extension

## Secrets
- [ ] No hardcoded API keys, passwords, or tokens
- [ ] All secrets reference environment variables

## Input validation
- [ ] User-supplied data is validated before use
- [ ] Error messages don't leak internal details

Five minutes with this checklist on AI-generated code catches most of these issues before they merge.
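The "validated by content, not just extension" item is a good example of something generated code often skips. A minimal sketch, assuming the three formats from the earlier spec: check the file's leading bytes against the well-known JPEG, PNG, and WebP signatures. The function names are hypothetical, and a matching signature only shows that the file starts like an image, not that it is a valid one.

```typescript
type ImageFormat = "jpeg" | "png" | "webp";

// True if `signature` appears in `bytes` starting at `offset`.
function matchesAt(bytes: Uint8Array, offset: number, signature: number[]): boolean {
  if (bytes.length < offset + signature.length) {
    return false;
  }
  return signature.every((value, i) => bytes[offset + i] === value);
}

// Identifies the format from the file's leading bytes, ignoring its name.
function detectImageFormat(bytes: Uint8Array): ImageFormat | null {
  // JPEG files start with FF D8 FF.
  if (matchesAt(bytes, 0, [0xff, 0xd8, 0xff])) {
    return "jpeg";
  }
  // PNG files start with an 8-byte signature: 89 "PNG" 0D 0A 1A 0A.
  if (matchesAt(bytes, 0, [0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a])) {
    return "png";
  }
  // WebP: "RIFF" at offset 0, a 4-byte size, then "WEBP" at offset 8.
  if (
    matchesAt(bytes, 0, [0x52, 0x49, 0x46, 0x46]) &&
    matchesAt(bytes, 8, [0x57, 0x45, 0x42, 0x50])
  ) {
    return "webp";
  }
  return null;
}
```

A file named `avatar.png` whose bytes are a shell script returns `null` here, which is exactly the case an extension check misses.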

The Tools That Enable Structured AI Development

The coding tool landscape has consolidated significantly. A few standouts for structured development:

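Cursor with .cursorrules: Cursor’s rules file lets you encode project-specific constraints that apply to every AI interaction. Recent Cursor versions also support rules stored under .cursor/rules and treat the single .cursorrules file as a legacy format, so check the current documentation before adopting either. A typical file looks like this: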

# .cursorrules

## Architecture
- This is a Next.js 15 App Router project
- Use server components by default; client components only when needed
- All database access goes through the repository layer (src/repositories/)
- Never call Prisma directly from API routes or components

## Code style
- TypeScript strict mode is enabled; no `any` types
- Error handling uses Result<T, E> pattern (see src/lib/result.ts)
- All async functions must handle errors explicitly

## Security
- Never log user PII
- Always use parameterized queries through Prisma
- Input validation uses Zod schemas

These rules ensure that every generation starts with your project’s constraints baked in.
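A rule like "Error handling uses Result<T, E> pattern" only helps if the pattern is unambiguous. The rules file points to src/lib/result.ts, whose contents are not shown, so the following is one plausible shape and not that file's actual code. The `ok`, `err`, and `parsePort` helpers are hypothetical.

```typescript
// One common way to model Result<T, E> as a discriminated union.
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };

function ok<T>(value: T): Result<T, never> {
  return { ok: true, value };
}

function err<E>(error: E): Result<never, E> {
  return { ok: false, error };
}

// Example: parsing a TCP port returns an explicit error instead of throwing.
function parsePort(input: string): Result<number, string> {
  const n = Number(input);
  if (input.trim() === "" || !isFinite(n) || Math.floor(n) !== n) {
    return err("not an integer");
  }
  if (n < 1 || n > 65535) {
    return err("out of range");
  }
  return ok(n);
}

const parsed = parsePort("8080");
if (parsed.ok) {
  console.log(`listening on ${parsed.value}`);
} else {
  console.log(`invalid port: ${parsed.error}`);
}
```

Because the compiler forces callers to check `ok` before touching `value`, generated code that ignores the error branch fails type checking instead of failing in production.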

Claude with extended thinking for complex problems — For architectural decisions, algorithm design, or debugging complex issues, extended thinking produces noticeably better results than standard generation. The tradeoff in speed is worth it for consequential code.

Continue.dev for IDE integration: An open-source alternative to Copilot that lets you bring your own models. The ability to use different models for different tasks (fast model for completions, powerful model for code review) is increasingly valuable.

Metrics That Actually Matter

Vibe coding optimizes for one metric: lines of code generated per hour. That’s the wrong metric.

Mature AI development teams track:

- Code review iteration rate — How many rounds of review before merge? AI-generated code that requires many review cycles isn’t actually saving time.

- Post-merge bug rate — Are bugs in AI-generated code different from human-written bugs? Often, yes — they cluster in specific patterns.

- Comprehension ratio — Can the engineer who merged a PR explain how it works a week later? If not, that’s a codebase timebomb.

- Context quality — Are AI sessions starting with sufficient context? Poor context → poor output.
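The first two metrics are easy to compute once pull requests carry the right labels. The sketch below assumes a hypothetical `MergedPullRequest` record exported from your review tool, with an `aiAssisted` flag the team applies itself; the field names are illustrative.

```typescript
// Hypothetical record for a merged pull request.
interface MergedPullRequest {
  reviewRounds: number; // review cycles before merge
  aiAssisted: boolean; // label applied by the team, not detected automatically
  bugsFoundAfterMerge: number;
}

interface ReviewMetrics {
  avgReviewRounds: number;
  postMergeBugRate: number; // share of PRs with at least one post-merge bug
}

// Summarizes a set of PRs; returns null when there is nothing to measure.
function summarize(prs: MergedPullRequest[]): ReviewMetrics | null {
  if (prs.length === 0) {
    return null;
  }
  const totalRounds = prs.reduce((sum, pr) => sum + pr.reviewRounds, 0);
  const withBugs = prs.filter((pr) => pr.bugsFoundAfterMerge > 0).length;
  return {
    avgReviewRounds: totalRounds / prs.length,
    postMergeBugRate: withBugs / prs.length,
  };
}

// Compare AI-assisted PRs against the rest.
function compareByOrigin(prs: MergedPullRequest[]) {
  return {
    aiAssisted: summarize(prs.filter((pr) => pr.aiAssisted)),
    other: summarize(prs.filter((pr) => !pr.aiAssisted)),
  };
}
```

Comparing the two groups side by side is what makes the numbers useful: an AI-assisted group with more review rounds and a higher bug rate is a sign the speed gain is illusory.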

The Human Element

Here’s the uncomfortable truth that the vibe coding hype glossed over: AI coding tools amplify the engineer using them, for better or worse.

A senior engineer who deeply understands the problem space, architecture, and security implications uses AI to generate code they could write themselves — faster. They review output critically. They catch mistakes. The output is good.

A junior engineer who doesn’t yet have that foundation uses AI to generate code they don’t fully understand. They can’t review it effectively. Mistakes slip through. The output is risky.

This isn’t an argument against junior engineers using AI tools. It’s an argument for mentorship structures that teach code review skills alongside prompt engineering skills. The ability to critically evaluate code matters more than ever now that code can be generated far faster than it can be carefully read.

The Path Forward

The evolution is clear: vibe coding was the “move fast and break things” of AI development. Structured AI development is the grown-up version.

The best teams in 2026 haven’t abandoned AI — they’ve harnessed it properly:

- Specification first, code second

- Incremental generation with mandatory review

- Architecture decisions documented and fed as context

- Security checklists applied to every AI output

- Metrics that measure quality, not just velocity

The developers who thrive in this era aren’t the ones who give AI the most control. They’re the ones who know how to direct it precisely, review it critically, and maintain the judgment to know when to write code themselves.

Vibe coding is dead. Structured AI development is what comes next.

Recommended: Continue.dev documentation, OWASP AI Security, Cursor rules guide
