Agentic AI in Software Engineering: When Your AI Writes, Tests, and Deploys Code


Something quietly crossed a threshold in 2025: AI stopped being a tool that helps developers write code and started becoming a system that does software engineering. Not autocomplete. Not a smarter Stack Overflow. An agent — one that reads your codebase, plans changes, writes tests, runs them, debugs failures, and opens a pull request.

This is agentic AI, and it’s reshaping what it means to be a software engineer.

What “Agentic” Actually Means

The word gets thrown around loosely, so let’s be precise. An agentic AI system is one that:

- Perceives its environment (reads files, runs commands, browses docs)

- Plans a sequence of steps to achieve a goal

- Acts — calling tools, writing code, executing tests

- Observes results and adjusts

- Iterates until the task is complete or it asks for help

This is fundamentally different from a chatbot that generates code when prompted. The agent runs in a loop. It has memory across steps. It takes initiative.

The most visible examples right now: OpenAI’s Codex agent, Anthropic’s Claude with extended tool use, Devin from Cognition, and the open-source SWE-agent from Princeton. Each can take a GitHub issue as input and output a working pull request — sometimes without any human intervention.

The Stack Behind an Engineering Agent

Understanding how these agents work helps you use them effectively — and build your own.

1. The Loop

Most agents run some variant of ReAct (Reason + Act):

Observe → Think → Plan → Act → Observe → ...

In practice, this is a prompt that includes the agent’s current observations, a chain-of-thought step, and a tool call. The model outputs JSON (or structured text), a tool runner executes it, and the result feeds back into the next prompt.

# Simplified agent loop (pseudocode: environment, llm, and tools are placeholders)
history = []
task_complete = False
while not task_complete:
    observation = environment.get_state()
    thought = llm.think(observation, task, history)
    action = llm.plan_action(thought)
    result = tools.execute(action)
    history.append((thought, action, result))
    task_complete = llm.check_done(result)
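To make the loop concrete, here is a minimal runnable sketch. `ScriptedLLM` is a hypothetical stand-in for a real model client: it replays a fixed list of JSON tool calls so the control flow can run offline. The tool name `add` and the `finish` convention are assumptions for illustration, not any vendor's API.

```python
import json

class ScriptedLLM:
    """Hypothetical stand-in for a model: replays canned JSON tool calls."""
    def __init__(self, replies):
        self._replies = iter(replies)

    def next_action(self, task, history):
        # A real client would send the task and history in a prompt here.
        return next(self._replies)

def run_agent(llm, tools, task, max_steps=10):
    history = []
    for _ in range(max_steps):            # hard cap so a confused agent cannot loop forever
        call = json.loads(llm.next_action(task, history))
        if call["tool"] == "finish":      # assumed convention for "task complete"
            return call["args"]["answer"], history
        result = tools[call["tool"]](**call["args"])
        history.append((call, result))    # the observation feeds the next step
    raise RuntimeError("step budget exhausted")

tools = {"add": lambda a, b: a + b}
llm = ScriptedLLM([
    '{"tool": "add", "args": {"a": 2, "b": 3}}',
    '{"tool": "finish", "args": {"answer": "sum is 5"}}',
])
answer, history = run_agent(llm, tools, task="add 2 and 3")
print(answer)
```

The step cap is a deliberate design choice: without it, an agent that never emits `finish` would run (and bill) indefinitely.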

2. The Tool Set

What an engineering agent can do depends on its tools. A well-equipped agent typically has:

| Tool | Purpose |
|---|---|
| read_file | Read any file in the repo |
| write_file | Create or modify files |
| bash | Run shell commands (tests, linters, builds) |
| search_codebase | Semantic search across the repo |
| web_search | Look up docs, error messages |
| git | Commit, branch, diff |

The combination of bash + write_file is especially powerful: the agent can write code, run it, see the error, fix the code, and run it again — the same cycle a human developer uses, at machine speed.
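A small sketch of that write, run, fix cycle follows. It is an illustration under assumptions: `run_python` stands in for a general `bash` tool (it only runs Python scripts, to stay portable), and the "fix" is hard-coded where a real agent would ask the model for one.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

def write_file(path: Path, content: str) -> None:
    path.write_text(content)

def run_python(path: Path, timeout: int = 10) -> tuple[int, str]:
    """Run a script and return (exit code, combined stdout and stderr)."""
    proc = subprocess.run(
        [sys.executable, str(path)],
        capture_output=True, text=True, timeout=timeout,
    )
    return proc.returncode, proc.stdout + proc.stderr

workdir = Path(tempfile.mkdtemp())
script = workdir / "snippet.py"

# First attempt contains a bug (undefined name).
write_file(script, "print(totl)\n")
first_code, first_output = run_python(script)

# A real agent would read first_output and ask the model for a fix;
# here the fix is hard-coded.
write_file(script, "total = 41 + 1\nprint(total)\n")
second_code, second_output = run_python(script)
```

Returning the exit code together with the captured output matters: the error text is exactly what the agent needs in its next prompt.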

3. Context Management

The biggest practical challenge is context. A large repo might have millions of tokens of code, far more than any model can hold at once. Good agents use:

- Semantic indexing (embeddings) to retrieve only relevant files

- Hierarchical summarization — high-level repo map + detailed focus on the task area

- Scratchpad memory — a running notes file the agent updates as it works
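The retrieval idea can be sketched in a few lines. Note the substitution: this example ranks files by bag-of-words cosine similarity, a crude stand-in for learned embeddings, so it runs without a model. The file names and contents are made up for illustration.

```python
import math
import re
from collections import Counter

def vectorize(text: str) -> Counter:
    # Word counts as a toy "embedding"; a real system would call an embedding model.
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def top_files(query: str, files: dict[str, str], k: int = 2) -> list[str]:
    q = vectorize(query)
    ranked = sorted(files, key=lambda name: cosine(q, vectorize(files[name])), reverse=True)
    return ranked[:k]

repo = {
    "user_service.py": "def get_profile(user): return user.profile",
    "billing.py": "def charge(card, amount): ...",
    "README.md": "Project overview and setup instructions",
}
relevant = top_files("null error in get_profile for user", repo, k=1)
print(relevant)
```

Only the top-ranked files go into the prompt; everything else stays on disk until the agent asks for it.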

Real Workflows That Work Today

Let me get concrete. Here are three engineering workflows where agentic AI is genuinely production-ready in 2026:

Bug Fixing from Issue

Input: GitHub issue #1234 — "NullPointerException in UserService.getProfile()"

Agent:

1. Reads issue, extracts stack trace

2. Locates UserService.java, reads surrounding context

3. Identifies missing null check before line 87

4. Writes the fix

5. Runs existing unit tests — 3 fail

6. Reads failing tests, understands expected behavior

7. Adjusts fix

8. All tests pass

9. Opens PR with description linking to issue
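Step 1 is mostly text parsing. As a rough sketch, a regular expression can pull Java stack frames out of an issue body. The sample trace and the `com.example` package below are invented for illustration, and the pattern only covers the common `at Class.method(File.java:line)` frame shape.

```python
import re

# Matches frames such as: at com.example.UserService.getProfile(UserService.java:87)
FRAME = re.compile(r"\bat\s+([\w.$]+)\.(\w+)\(([\w$]+\.java):(\d+)\)")

def parse_frames(issue_text: str) -> list[dict]:
    frames = []
    for cls, method, filename, line in FRAME.findall(issue_text):
        frames.append({"class": cls, "method": method, "file": filename, "line": int(line)})
    return frames

issue = """NullPointerException in UserService.getProfile()
java.lang.NullPointerException
    at com.example.UserService.getProfile(UserService.java:87)
    at com.example.ProfileController.show(ProfileController.java:42)
"""
frames = parse_frames(issue)
top = frames[0]   # the innermost frame is where the agent starts reading
print(top["file"], top["line"])
```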

This is not hypothetical. Teams at several mid-size startups report that 30-40% of their bug fix PRs in Q1 2026 were opened by agents, with humans reviewing and merging.

Code Migration

Large-scale migrations (updating an API, changing a library, renaming a concept throughout a codebase) are ideal for agents. They’re tedious for humans, need little creative judgment, and follow clear patterns.

Agents can:

- Enumerate all affected files

- Apply consistent transformations

- Run type checks and tests after each batch

- Produce a migration report

What used to be a 2-week sprint can become a 2-hour agent run.
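Here is one way the batch-and-verify pattern might look, as a sketch only. The `old_fetch` to `fetch` rename is a hypothetical migration, files live in an in-memory dict, and `compiles` is a deliberately weak stand-in for running real type checks and tests.

```python
import re
from typing import Callable

def migrate_in_batches(
    files: dict[str, str],
    transform: Callable[[str], str],
    check: Callable[[dict[str, str]], bool],
    batch_size: int = 2,
) -> dict:
    """Apply transform batch by batch; roll a batch back if check fails."""
    report = {"migrated": [], "rolled_back": []}
    # Enumerate affected files: those the transform would actually change.
    affected = [name for name in sorted(files) if transform(files[name]) != files[name]]
    for start in range(0, len(affected), batch_size):
        batch = affected[start:start + batch_size]
        backup = {name: files[name] for name in batch}
        for name in batch:
            files[name] = transform(files[name])
        if check(files):
            report["migrated"].extend(batch)
        else:
            files.update(backup)  # restore the original contents
            report["rolled_back"].extend(batch)
    return report

def rename(src: str) -> str:
    # Hypothetical migration: old_fetch(...) becomes fetch(...)
    return re.sub(r"\bold_fetch\(", "fetch(", src)

def compiles(files: dict[str, str]) -> bool:
    # Stand-in for "run type checks and tests": every file must still parse.
    try:
        for name, src in files.items():
            compile(src, name, "exec")
        return True
    except SyntaxError:
        return False

repo = {
    "a.py": "x = old_fetch(1)\n",
    "b.py": "y = old_fetch(2)\n",
    "c.py": "z = 3\n",
}
report = migrate_in_batches(repo, rename, compiles)
print(report)
```

Checking after each batch rather than at the end keeps failures small: a broken batch is rolled back and reported instead of poisoning the whole run.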

Documentation Generation

Agents that can read code and write prose are naturally good at keeping docs in sync with implementation. An agent triggered on every merge can:

- Detect changed public APIs

- Update docstrings and README sections

- Generate changelog entries

- Ensure examples still compile
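The first step, detecting changed public APIs, does not need a model at all. A minimal sketch for Python sources, using the standard `ast` module (Python 3.9+ for `ast.unparse`): it assumes "public" means a top-level function whose name does not start with an underscore, and it ignores classes and methods. The function names in the sample are invented.

```python
import ast

def public_signatures(source: str) -> dict[str, str]:
    """Map each public top-level function name to its argument list."""
    sigs = {}
    for node in ast.parse(source).body:
        if isinstance(node, ast.FunctionDef) and not node.name.startswith("_"):
            sigs[node.name] = ast.unparse(node.args)
    return sigs

def changed_apis(old_source: str, new_source: str) -> dict[str, list[str]]:
    old, new = public_signatures(old_source), public_signatures(new_source)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(n for n in old.keys() & new.keys() if old[n] != new[n]),
    }

old = "def get_profile(user_id): ...\ndef _helper(): ...\n"
new = "def get_profile(user_id, include_email=False): ...\ndef delete_profile(user_id): ...\n"
diff = changed_apis(old, new)
print(diff)
```

The resulting dict is a natural input for the agent: it only needs to rewrite docs for the names listed, not the whole module.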

The Human’s New Role

If agents handle implementation, what do engineers do?

The honest answer: engineering shifts up the abstraction stack.

Before: Architect → Spec → Engineer writes code → QA tests → Deploy

After: Architect → Spec → Agent writes code → Engineer reviews → Deploy

The engineer becomes the reviewer, the architect, and the exception handler. You need to:

- Define tasks clearly enough for an agent to execute them

- Review agent output with a critical eye (agents make confident mistakes)

- Handle the genuinely novel problems agents can’t tackle

- Design systems for agent-friendliness (clear interfaces, good test coverage)

This is a real skill shift. Junior developers who relied on implementation practice to learn may find the learning curve changes — while senior engineers who were bottlenecked on implementation may suddenly be 10x more productive.

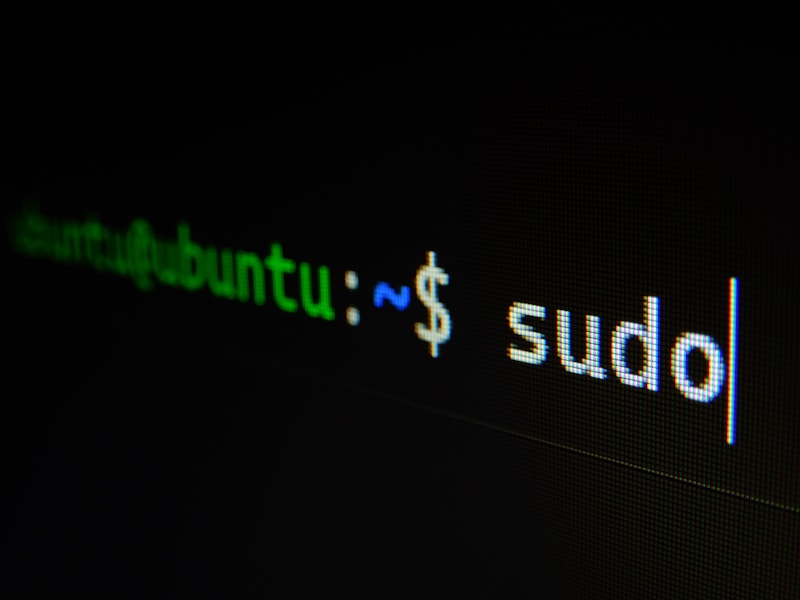

Photo by Mohammad Rahmani on Unsplash

Where It Breaks Down

Agentic AI is impressive but not magic. Current failure modes:

Context amnesia: Agents working on large tasks sometimes “forget” earlier decisions and contradict themselves 20 steps later. Mitigation: structured scratchpads and explicit checkpointing.

Test-passing ≠ correct: An agent that writes both the code and the tests can make them agree with each other while both being wrong. Always keep a suite of human-written integration tests the agent cannot modify.

Security blind spots: Agents often copy patterns from training data, including insecure ones. Automated security scanning (SAST) on every agent PR is non-negotiable.

Scope creep: Agents given broad goals sometimes make changes far outside the intended scope. Use sandboxed repos, explicit file allowlists, and careful task descriptions.
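Two of these mitigations, file allowlists and tests the agent cannot modify, can be enforced mechanically before a PR ever reaches a reviewer. A sketch, assuming you already have the list of changed paths (for example from a diff); the glob patterns and paths are hypothetical.

```python
from fnmatch import fnmatch

ALLOWED = ["src/users/*", "tests/unit/*"]   # hypothetical task scope
PROTECTED = ["tests/integration/*"]         # human-written tests the agent must not touch

def review_changes(changed_paths: list[str]) -> dict[str, list[str]]:
    verdict = {"ok": [], "out_of_scope": [], "protected": []}
    for path in changed_paths:
        # Protected paths win over the allowlist.
        if any(fnmatch(path, pat) for pat in PROTECTED):
            verdict["protected"].append(path)
        elif any(fnmatch(path, pat) for pat in ALLOWED):
            verdict["ok"].append(path)
        else:
            verdict["out_of_scope"].append(path)
    return verdict

verdict = review_changes([
    "src/users/service.py",
    "tests/integration/test_login.py",
    "deploy/prod.yaml",
])
print(verdict)
```

A CI job could fail the agent's PR whenever `protected` or `out_of_scope` is non-empty.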

Getting Started: Building Your First Engineering Agent

You don’t need to build Devin from scratch. Here’s a practical path:

Week 1: Use existing agents

- Try Claude with extended thinking and tool use on a real bug in your repo

- Use GitHub Copilot Workspace for a small feature

- Observe where they succeed and fail

Week 2: Build a simple agent with LangGraph or AutoGen

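from langgraph.graph import StateGraph, START, END
from langchain_anthropic import ChatAnthropic

# Define your tools (placeholders: implement these yourself)
tools = [read_file, write_file, run_bash, search_repo]

# Build the graph. AgentState, llm_with_tools (a ChatAnthropic model bound to
# the tools), tool_runner, and should_continue are yours to define.
graph = StateGraph(AgentState)
graph.add_node("think", llm_with_tools)
graph.add_node("act", tool_runner)
graph.add_edge(START, "think")
# Without an exit condition the think/act cycle never ends: route to "act"
# only while the model requests a tool, otherwise stop.
graph.add_conditional_edges("think", should_continue, {"continue": "act", "done": END})
graph.add_edge("act", "think")  # Loop until done
agent = graph.compile()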

Week 3: Deploy on real tasks

- Start with low-risk tasks: dependency updates, formatting, doc generation

- Build a feedback loop: log every agent run, review outputs, tune prompts
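The feedback loop can start very small. One possible sketch: append one JSON line per agent run and compute a merge rate from the log. The field names and the `merged` / `rejected` outcome labels are assumptions, not a standard schema.

```python
import json
import tempfile
import time
from pathlib import Path

def log_run(log_path: Path, task: str, steps: int, outcome: str) -> None:
    record = {"ts": time.time(), "task": task, "steps": steps, "outcome": outcome}
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")   # one JSON object per line (JSONL)

def success_rate(log_path: Path) -> float:
    runs = [json.loads(line) for line in log_path.read_text(encoding="utf-8").splitlines()]
    merged = sum(1 for r in runs if r["outcome"] == "merged")
    return merged / len(runs) if runs else 0.0

log_path = Path(tempfile.mkdtemp()) / "agent_runs.jsonl"
log_run(log_path, "bump requests version", steps=4, outcome="merged")
log_run(log_path, "reformat utils module", steps=9, outcome="rejected")
rate = success_rate(log_path)
print(rate)
```

Tracking the rate per task type shows where prompts need tuning and which tasks are ready to run with less supervision.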

The Bottom Line

Agentic AI in software engineering is past the hype phase. It’s in the “awkward teenager” phase — clearly capable, occasionally brilliant, sometimes infuriating, and getting better every month.

The engineers who thrive in the next five years won’t be the ones who resisted agents. They’ll be the ones who learned to work with them: directing them effectively, reviewing their output critically, and building systems designed for both human and AI collaboration.

The compiler didn’t replace programmers. Neither will the agent. But just as programmers who embraced compilers outcompeted those who didn’t, the same dynamic is playing out now — at a much faster pace.

Start building with agents today. Your future self will thank you.
